The First Report on Enhancing Low-Light Images with an Unsupervised GAN!

3 main points

✔️ The first study to apply unsupervised learning to converting low-light images into normal-light images

✔️ EnlightenGAN can brighten real-world low-light images from a variety of domains

✔️ An attention mechanism consistently improves visual quality

EnlightenGAN: Deep Light Enhancement without Paired Supervision

written by Yifan Jiang, Xinyu Gong, Ding Liu, Yu Cheng, Chen Fang, Xiaohui Shen, Jianchao Yang, Pan Zhou, Zhangyang Wang

(Submitted on 17 Jun 2019)

Comments: Published on arXiv

Subjects: Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

Background

An image taken in a dark place tends to have low contrast, poor visibility, and high ISO noise. A number of algorithms have been proposed to mitigate this degradation, including histogram-based and cognition-based methods. State-of-the-art deep learning approaches to image restoration and enhancement, such as super-resolution, rely heavily on synthetic or captured pairs of corrupted and clean images for training. However, it is very difficult to capture a low-light photo and a normal-light photo of the same scene at the same time.

Key Research Points

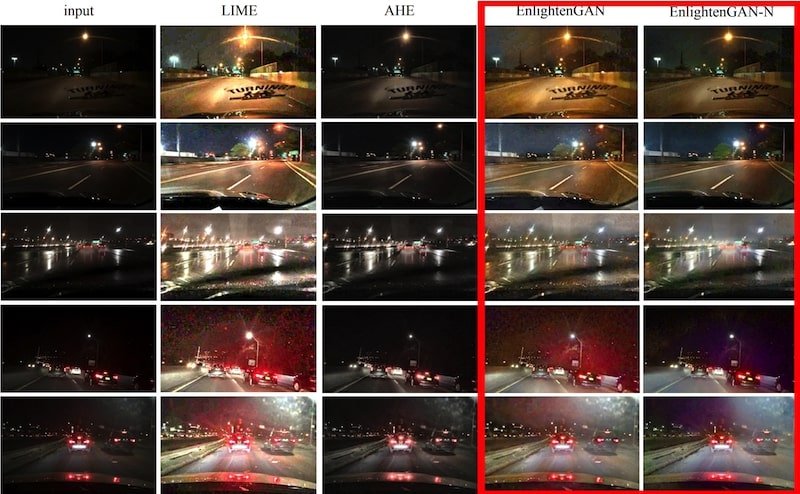

The paper proposes EnlightenGAN, the first unsupervised generative adversarial network (GAN) for enhancing low-light images. It can be trained without paired low-light/normal-light images, yet it generalizes well to a variety of test images. As in unsupervised image-to-image translation, the GAN learns an unpaired mapping between the low-light and normal-light image spaces without relying on precisely paired images. This removes the need to train only on synthetic data or on limited paired data captured in controlled settings. Thanks to the unsupervised learning setup, EnlightenGAN can be adapted to brighten real-world low-light images from a variety of domains (Figure 1).

Figure 1: A real-world low-light image transformed into a normal-light image by unsupervised learning (note the red line!)
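
To make the idea of unpaired training data concrete, here is a minimal sketch of how batches might be drawn when no low-light image has a matching normal-light counterpart. It is not the authors' data pipeline: the function name `unpaired_batches` and the file names are hypothetical, and the only assumption is that the two image sets are sampled independently of each other.

```python
import random


def unpaired_batches(low_light, normal_light, batch_size, steps, seed=0):
    """Yield (low_batch, normal_batch) tuples drawn independently.

    The two collections may differ in size and content; no item in one
    batch is assumed to show the same scene as any item in the other.
    """
    rng = random.Random(seed)
    for _ in range(steps):
        low_batch = rng.sample(low_light, batch_size)        # generator inputs
        normal_batch = rng.sample(normal_light, batch_size)  # "real" examples for the discriminator
        yield low_batch, normal_batch


# Hypothetical file names, used only for illustration.
low_light = [f"night_{i:03d}.png" for i in range(8)]
normal_light = [f"day_{i:03d}.png" for i in range(5)]

batches = list(unpaired_batches(low_light, normal_light, batch_size=2, steps=3))
for low_batch, normal_batch in batches:
    print(low_batch, normal_batch)
```

Because the two sets are sampled separately, they can come from different scenes, cameras, or domains. This is the property that lets an unpaired method use real-world low-light photos for which no normal-light reference exists.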
